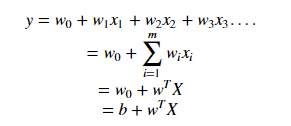

Question: I was reading the book Support Vector Machines Succinctly by Alexandre Kowalczyk. I understood how we end up with the hyperplane equation $\mathbf{w} \cdot \mathbf{x} + b = 0$. Logically, if this is the equation of a hyperplane, then every point that lies on the hyperplane must satisfy it.

Now we can see the relationship between the line equation and the hyperplane equation. Given a vector $\mathbf{w} = (w_0, w_1)$, a point $\mathbf{x} = (x, y)$ and a scalar $b$, we define a hyperplane with the equation $\mathbf{w} \cdot \mathbf{x} + b = 0$. This is equivalent to $w_0 x + w_1 y + b = 0$, which is the same as $w_1 y = -w_0 x - b$. We isolate $y$ to get $y = -(w_0 / w_1)x - (b / w_1)$. If we define $a = -(w_0 / w_1)$ and $c = -(b / w_1)$, this becomes the line equation $y = ax + c$. So the bias $c$ of the line equation is equal to the bias $b$ of the hyperplane equation when $w_1 = -1$. I copied that last sentence exactly from the book.

I don't understand why they call $b$ or $c$ a bias. Shouldn't it be called the intercept?

From the hyperplane equation they then get a function called the hypothesis function. The job of this function is to predict the label of a given data point $\mathbf{x}$. Because the hypothesis function uses the hyperplane equation, which produces a linear combination of the values, it is also called a linear classifier.

So the same equation has three different names: hyperplane, hypothesis function and linear classifier. I don't understand why they use three different names.

Answer: The word "bias" in this context means the same thing as the intercept.

As for why three different names are used, the answer is a bit more subtle. Based on your question, I assume you are using an SVM for classification. For simplicity, suppose you have two classes, $c_1$ and $c_2$. Also suppose that all the examples you want to classify are represented as feature vectors. SVMs are grounded in a framework called statistical learning theory.

Source: Link. Question author: F.C.

In simple words, for each test observation we plug all of its variables into the equation above and decide which side of the hyperplane that observation belongs to, based on the sign of $f(x)$.

The maximal margin classifier consists of one hyperplane that cuts through the p-dimensional space and divides it into two pieces, plus two lines, one on each side of that hyperplane. Those lines are called margins, and the observations touching them are called support vectors. They determine the width of the margin: the farther those points are from the hyperplane, the farther the two margin lines are from it. The touching points are called vectors because they are vectors in p-dimensional space. Interestingly, the classifier depends only on the support vectors and not on any other observation in the training set. That is, if you move a support vector the margin changes, but if you move any other observation (without letting it cross the margin) the margin does not change.

When observations are allowed to violate the margin, each observation gets a slack variable. If the slack variable of the i-th observation is equal to zero, that observation is on the correct side of the margin. If it is greater than 0, the observation is on the wrong side of the margin. If it is greater than 1, the observation is on the wrong side of the hyperplane. Now consider the role of C: it bounds the sum of the slack variables over all n observations, so it acts as a budget for margin violations. If C = 0, no observation is allowed to violate the margin.
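The three ideas discussed above (rewriting the hyperplane as a line, predicting a label from the sign of $f(x)$, and reading a slack variable) can be sketched in a few lines of Python. This is only an illustrative sketch, not code from the book: the function names `hyperplane_to_line`, `hypothesis` and `describe_slack` are hypothetical, the labels +1 and -1 are an assumed convention, and mapping $f(x) = 0$ to +1 is an arbitrary tie rule.

```python
def hyperplane_to_line(w0, w1, b):
    """Rewrite w0*x + w1*y + b = 0 as y = a*x + c (only possible if w1 != 0)."""
    if w1 == 0:
        raise ValueError("w1 must be non-zero to isolate y")
    a = -(w0 / w1)  # slope of the line
    c = -(b / w1)   # intercept of the line; equals b when w1 == -1
    return a, c


def hypothesis(w, x, b):
    """Predict a label from the sign of f(x) = w . x + b.

    Assumption: labels are +1 / -1, and f(x) == 0 is assigned to +1.
    """
    f = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1 if f >= 0 else -1


def describe_slack(slack):
    """Describe where an observation lies, given its slack variable."""
    if slack < 0:
        raise ValueError("slack variables are non-negative")
    if slack == 0:
        return "correct side of the margin"
    if slack > 1:
        return "wrong side of the hyperplane"
    return "wrong side of the margin"


if __name__ == "__main__":
    # With w1 = -1 the line's intercept c equals the hyperplane's bias b.
    a, c = hyperplane_to_line(0.4, -1.0, 9.0)
    print("slope:", a, "intercept:", c)

    # Points on opposite sides of the hyperplane get opposite labels.
    w, b = (0.4, -1.0), 9.0
    print(hypothesis(w, (5.0, 0.0), b), hypothesis(w, (5.0, 20.0), b))

    for s in (0.0, 0.5, 1.5):
        print(s, "->", describe_slack(s))
```

Keeping `w` and `b` as separate arguments mirrors the notation in the question; the same numbers describe the hyperplane, feed the hypothesis function, and define the linear classifier, which is why the three names refer to closely related objects.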